A team of AI researchers at Facebook published a paper earlier this week describing a technique to extract playable characters from real-world videos.

Facebook’s AI Research team has created an AI called Vid2Play that can extract playable characters from videos of real people, creating a much higher-tech version of ’80s full-motion video (FMV) games like Night Trap. Its neural networks analyze ordinary videos of people performing specific actions, then recreate the person and the action in any environment and let you control the resulting character with a joystick.

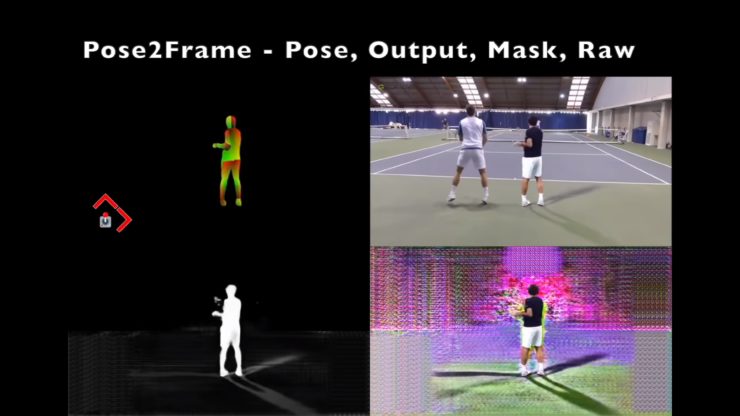

Two different neural networks were trained on footage, just five to eight minutes long, of someone performing a specific action, like playing tennis. The first network, Pose2Pose, analyzes the footage and extracts the person who’s going through the motions. The second network, Pose2Frame, then takes all the elements of that person, including the shadows and reflections they create, and overlays them onto a new background setting, which could be a rendered video game locale.
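To make the overlay step concrete, here is a minimal sketch of mask-based compositing in Python. It is not Facebook's code: the function name `composite`, the grayscale pixel grids, and the idea of a per-pixel mask between 0 and 1 are all assumptions made for illustration. The sketch only shows how a generated person layer could be blended onto a new background, with fractional mask values standing in for soft effects such as shadows.

```python
def composite(person, mask, background):
    """Blend a person layer over a background, pixel by pixel.

    All three arguments are equally sized 2D lists of grayscale values.
    mask holds weights in [0, 1]: 1 keeps the person pixel, 0 keeps the
    background pixel, and values in between mix the two (hypothetical
    stand-in for soft effects like shadows).
    """
    if not (len(person) == len(mask) == len(background)):
        raise ValueError("person, mask and background must have the same height")
    frame = []
    for p_row, m_row, b_row in zip(person, mask, background):
        if not (len(p_row) == len(m_row) == len(b_row)):
            raise ValueError("person, mask and background must have the same width")
        frame.append([m * p + (1.0 - m) * b
                      for p, m, b in zip(p_row, m_row, b_row)])
    return frame


# Illustrative 2x2 example: a bright "person" over a dark background.
person = [[200, 200], [200, 200]]
mask = [[1.0, 0.0], [0.5, 0.0]]
background = [[10, 10], [10, 10]]
frame = composite(person, mask, background)
```

In this toy example the top-left pixel comes entirely from the person layer, the right-hand column comes entirely from the background, and the bottom-left pixel is an even mix of the two.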

The results aren’t quite as smooth or fluid as the detailed 3D video game characters modern consoles can generate, but they are completely controllable. As this research evolves the results will undoubtedly improve, but a hybrid approach might be even better. The AI could extract characteristics of someone in a video, including the nuances of how they move, and automatically apply them to a custom 3D character, saving players from painstakingly having to make hundreds of tweaks themselves. It won’t be useful just for video games, though. As the world moves towards more virtual reality experiences (remember, Facebook owns Oculus), it would make creating believable avatars of ourselves much easier. Your friend could shoot a smartphone video of you dancing for a few seconds, and a few minutes later you’d appear just as awkward in a virtual world.